About Me

I am a machine learning researcher specializing in models that tackle complex problems at the intersection of AI and neuroscience. I did my PhD in computational neuroscience at the Kavli Institute for Systems Neuroscience with Christian Doeller and at University College London with Caswell Barry, followed by a postdoc with Mackenzie Mathis at EPFL in Switzerland. I am currently a researcher at the Lamarr Institute and Fraunhofer IAIS, working with Mehdi Ali on improving reasoning in large language models.

Selected Publications

Instead of relying on explicit chain-of-thought (CoT) to improve reasoning in large language models (LLMs), we perform the reasoning in latent space using looped transformer models. We show that capacity and compute can be modelled via memory banks and adaptive loops across individual layers.

ICLR Latent Reasoning Workshop (2026)

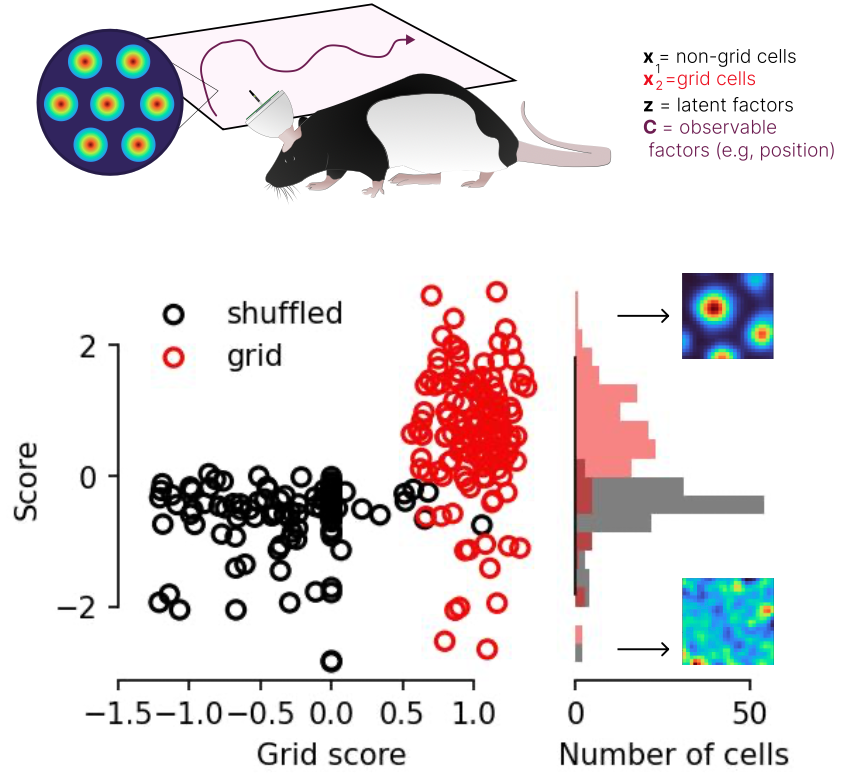

Here, Steffen developed a method for producing identifiable attribution maps in time-series data using regularized contrastive learning. I applied it to synthetic and real-world grid-cell data.

NeurIPS Workshop (2024) & AISTATS (2025)

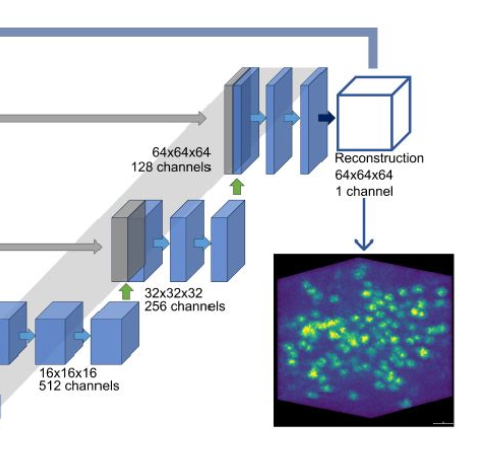

In this study, Cyril built a self-supervised pipeline for segmenting cells in 3D fluorescence microscopy volumes, reducing the need for manual annotations.

eLife (2024)

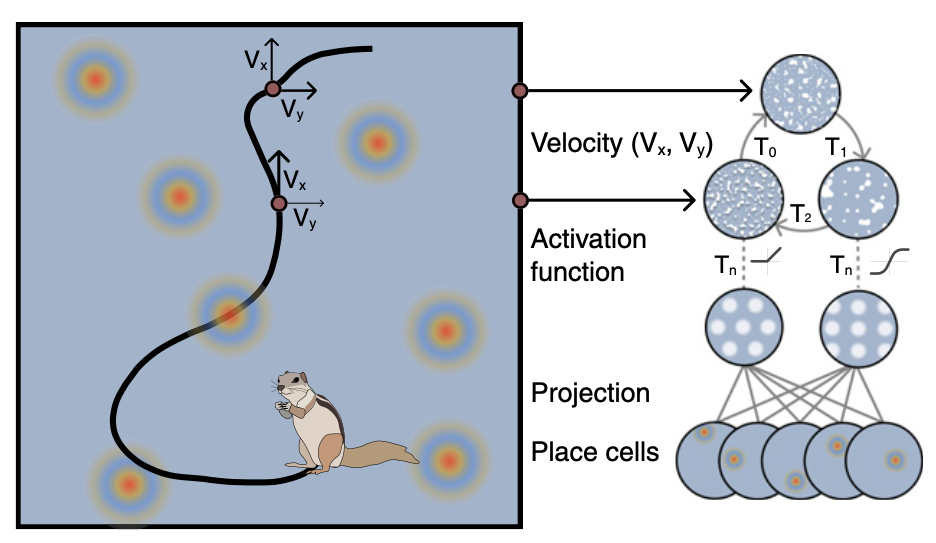

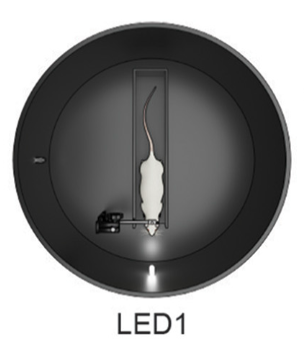

Many artificial neural networks (ANNs) have shown grid-cell-like activity in their hidden units. We ask whether this is an artifact of the objective function used. Includes cute animals.

Current Biology (2023)

Here we set out to build a neural network that simulates the egocentric-to-allocentric transformation circuit from visual areas to the hippocampus. It works, and we now also have a benchmark for ANNs that closely follows the Four Mountains Test.

CVPR (2023)

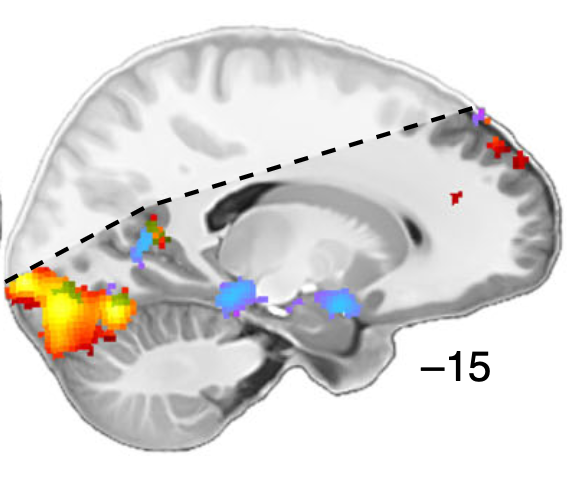

After many discussions in our lab meetings about the problems with eye trackers, we decided to build a deep learning framework that decodes eye position directly from MRI data.

Nature Neuroscience (2021)

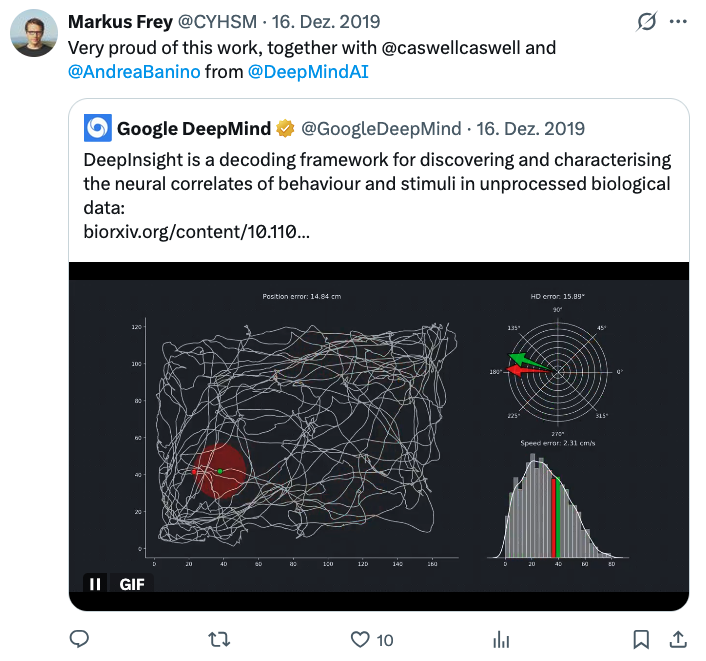

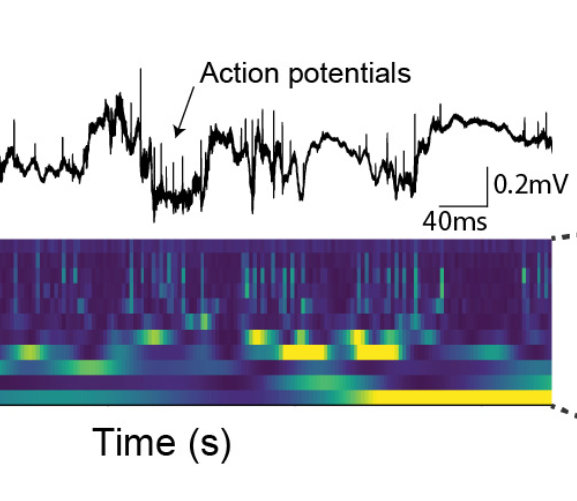

DeepInsight, nicknamed the automatic John O'Keefe, decodes behavioral variables from wide-band neural recordings. This gives a more objective measure of information content than spike-based methods.

eLife (2021)

In this study, Matthias showed how directional tuning in the human visual-navigation network shifts depending on behavioral context during spatial navigation.

Nature Communications (2020)

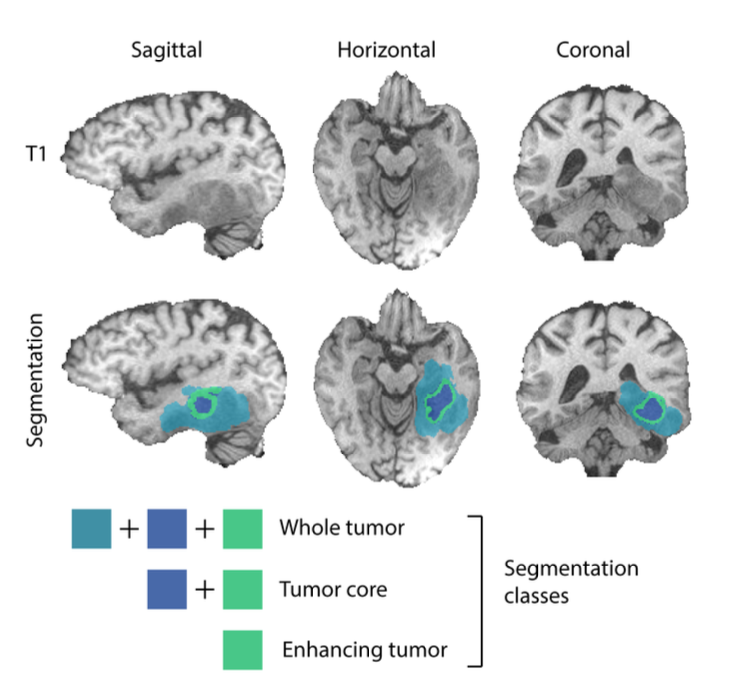

We saw this challenge and thought it would be nice to compete. We finished in the top 5, with one of the most efficient implementations and an autoencoder-based regularization.

MICCAI (2020)

Here we tested a setup that I introduced during my master's thesis to see whether stimulating individual neurons can shift the activity of head-direction cells in the presubiculum. It can!

Journal of Neuroscience (2018)

Experiments

Awesome NeuroAI

A curated list of papers and reviews from the intersection of deep learning and neuroscience, which I started collecting during my PhD and update from time to time.

GitHub Repository →

The Human Move

Quantifying the psychological impact of chess moves using a simple heuristic based on engine evaluations. As seen on Hacker News.

GitHub Repository →

Exploring spatial representations in Visual Foundation Models

An exploration of neural representations in large vision models, with a focus on spatially selective cells that encode parts of the visual field.

Read More →

The Genius Tax

Thoughts on the celebration of brilliance in academia and the unseen cost of toxic environments on junior researchers.

Read More →

Timeline

Here are some posts from recent years, rescued from social media before the ecosystem collapsed.